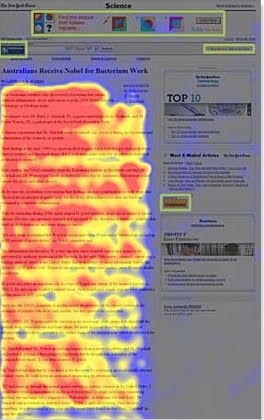

Many website designers have heard of a concept called “banner blindness”: the tendency for people to leave some screen elements, often those on the right side of the page, out of their understanding of a site. The phenomenon dates back at least as far as a 1998 paper by Benway and Lane at Rice University. Ironically, banner blindness is often induced by the very effort designers make to draw the user’s attention to these elements. They put a box around them, give them a different background color, or use one of several other visual styling techniques to signal that they’re important. And they double their efforts when testing shows that people seem to miss them. These elements don’t even have to be at the sides of the screen; banner blindness can also affect elements at the bottom and, yes, even at the top.

This phenomenon is often mistakenly described as users failing to look at these objects. But not looking at them takes effort: users actively avoid these elements, so a simple failure to look can’t be the correct explanation. To understand banner blindness, you need to understand the two components of our vision (central vision and peripheral vision) and how they interact.

This eyetracking heatmap is copyrighted to Nielsen Norman Group. © Nielsen Norman Group. All rights reserved.

Near the center of the retina at the back of each eye is a small (about 5 mm), yellowish region called the macula. Within the macula is the fovea, an area of densely packed photoreceptor cells that provides us with high-acuity color information. Central vision covers a visual field of only about 13°, so seeing an object with our fovea requires us to look directly at it. This means that central vision usually corresponds to our attentional focus: when we want detailed information about an object, we move our eyes so that it falls within this region.

Outside the macula, however, is a much larger area of cells that make up our peripheral vision. Because its photoreceptors are far less densely packed, this part of the eye produces information of significantly lower acuity and little color. But it is much better at detecting other information, such as movement and, in particular, dim, black-and-white information. (You may not believe it, but things in your peripheral vision are largely black and white. That’s a topic for another day.) Peripheral vision extends our field of view from about 13° to roughly 180°.

We often don’t realize it, but we “see” lots of objects in our peripheral vision. To prove this to yourself, stare straight ahead. Move one of your hands to the side of your head like you’re waving to someone or being held up in a robbery (on one side at least). Point your finger to the sky. Now move your finger to point forward and then back to the sky, all while still looking straight ahead. Chances are you saw your finger move even without changing your gaze.

Many people are trained to process objects in their peripheral vision. Astronomers are taught to use this part of their vision to see faint objects more clearly: a dim star, for instance, is best seen when your eyes are not aimed directly at it. Similarly, anyone who plays basketball, soccer, hockey, or any other sport that involves passing between teammates learns to make a “blind pass” to a player they are not looking at directly. (Otherwise, the pass is said to be “telegraphed.”) But most peripheral vision processing happens at an unconscious level. In fact, these two types of vision (central and peripheral) work together: our peripheral vision helps us decide whether we need to look at something or can ignore it.

Think of it this way: as early primates, if we saw a friendly fellow primate in our peripheral vision, we had no need to look at it and could continue what we were doing; but if we thought it might be a predator, we would want to look over at it to determine whether we were in danger. (Or at least, the primates that did this tended to survive and pass the trait along.) If you read the March Newsletter about the limitations of attention, you’ll see why this is such a valuable role for our peripheral system. Because our attention is so limited, our peripheral vision works to keep us from being distracted by everything going on around us.

In modern times we rarely need to make that kind of danger decision, but the same process applies. If we see an object that we know (or assume we know) is irrelevant to what we’re doing, we keep focusing on the objects within our central vision and do not shift our gaze to that peripheral object. So when we encounter an object with special formatting, our minds tell us it’s probably an ad or some visual “eye candy.” At the very least, being boxed off, it must be separate from what we’re currently doing. We don’t ignore it. We perceive the object in our periphery, process that perception, and judge it to be a distraction from our task. Then we actively avoid looking at it. It’s not that these objects are hidden from view, or that we don’t notice them. They’re just formatted in a way that tells us they aren’t important.